NVIDIA dominates AI training and inference with its GPU and data center accelerator platforms, including the H100, B200, and GB200. It commands ~89% of the AI accelerator market, with a $130B+ annual revenue run rate and a $3T+ market cap. It aims to power the entire AI compute stack, from cloud training to edge inference, autonomous vehicles, and robotics.

The undisputed king of AI compute: it designs the GPUs that train virtually every major AI model.

Key Products & Platforms

H100

Data Center GPU: Current workhorse for AI training

B200 / GB200

Data Center GPU: Next-gen Blackwell architecture

A100

Data Center GPU: Previous-gen, still widely deployed

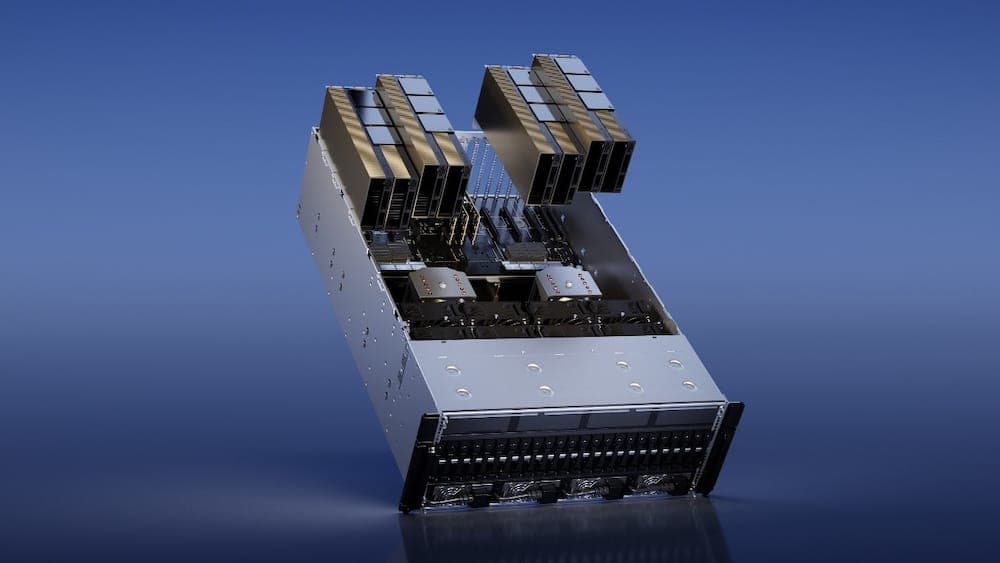

DGX Systems

AI Supercomputer: Turnkey AI training servers

CUDA / cuDNN

Software Platform: Industry-standard AI development ecosystem

DRIVE Platform

Automotive AI: Self-driving compute platform

Key Customers

Competitive Position

Market Share

~80% of the AI accelerator market

Competitive Moat

CUDA software lock-in, 15+ years of ecosystem development

Key Risk

Customer concentration among hyperscalers; competition from custom chips such as Google TPU and Amazon Trainium

Why This Company Matters

If you want to understand AI infrastructure, start here. NVIDIA's GPUs are the foundation of virtually every AI system, from ChatGPT to autonomous vehicles. Its dominance is unmatched in tech.